Disinformation campaigns are prevalent during crises. Here’s how you can protect yourself.

With the COVID-19 pandemic, Black Lives Matter protests nationwide, and the countdown to the 2020 presidential election, there has been a flurry of online activity.

We're tweeting about these events, we're sharing news articles about them on Facebook, and we're uploading live videos as events happen during protests. These platforms are being used to communicate, to express outrage, to share what we're witnessing on the streets, to debate ideas, and to campaign for candidates.

This isn't new, of course. Social media has long been a way to get information out quickly.

"When the plane landed on the Hudson, that was one of the first events that was social media first," says Kate Starbird, associate professor in the Department of Human Centered Design and Engineering at the University of Washington. "The news went out via social media first because it was faster. People could actually see what was going on long before people could write a story about it or put it on the news."

Social media has also been lauded as a way for people to get information from a variety of perspectives — everybody can share what they see.

But, she adds, "the problem is that there is some inherent risk and vulnerabilities in getting things at that speed because speed can drive misinformation and mistakes." It's also incredibly difficult to know if all of these voices on social media are real. Some of those accounts might be deliberately trying to spread disinformation.

Disinformation spreads quickly during and after natural disasters, mass shootings, and other dangerous events.

In fact, for more than a decade, Starbird has been researching how misinformation and disinformation spread online during these kinds of crises.

During a crisis, there's a lot of uncertainty and fear, so we start theorizing (or "rumoring") about what to do, and that rumoring can create misinformation. Political actors can then either create additional misinformation or amplify existing rumors to spread false information for political reasons. "When there's fear and anxiety, we're acutely vulnerable to politicization, misinformation, and disinformation," she says.

For example, climate science denialists can use natural disasters — such as hurricanes or winter storms — to amplify false information that supports their cause.

Not all this disinformation comes from foreign actors.

"We tend to think about it as foreign and Russian," Starbird says, "but that's going to be a small part of what is going on right now. I think we need to be more aware that the tools and techniques of disinformation are democratized… the same kind of techniques are being used by domestic actors, activists, political operatives and foreign campaigns."

Joan Donovan, Research Director of the Shorenstein Center on Media, Politics and Public Policy, agrees. During Donald Trump's campaign for president, she saw many white supremacists using these techniques to organize. But she also saw advertisers using similar techniques — such as fake communities, fake engagement, and fake reviews.

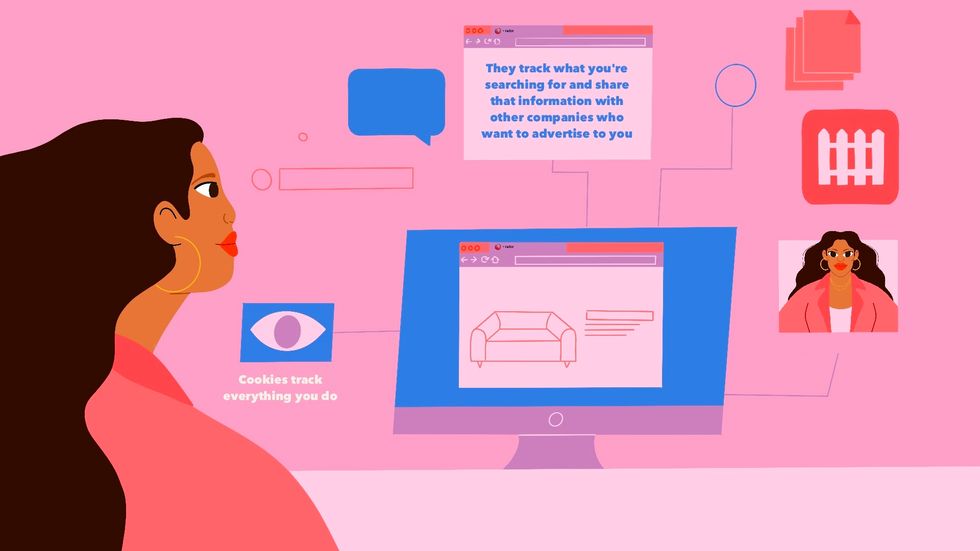

Your personal data can be used in disinformation campaigns too.

Everything you do online generates personal data. Cookies and other web trackers embedded in the sites you visit collect this data when you create social media profiles, buy things online, or simply browse the internet. Many of these cookies then use your data to personalize the ads you see.

"An advertiser can select ads to show you based on the profile they have built from your data," explains Marshall Erwin, Senior Director of Trust and Security at Mozilla, but "these same sophisticated profiles and ad targeting tools allow politicians to slice and dice the electorate. Politicians might have a divisive message that they can target to certain demographics, such as one designed to radicalize white, middle aged men."

This profile can also be used to target you and get you to believe and share disinformation.

If this happened, "you'd be getting skewed information targeted towards you based on the customization of the information environment," says Donovan.

This can be especially powerful if you're in a social media echo chamber, where many of your friends and loved ones share your beliefs, so you rarely see anything that contradicts them. "If individuals are caught in a media echo chamber and they're not seeking out a diverse set of sources, then they're going to be prone to sharing disinformation, just by the virtue that they're not lumping in the other information that is contradicting what it is that they are seeing," says Donovan. And this helps that disinformation spread to your friends and family.

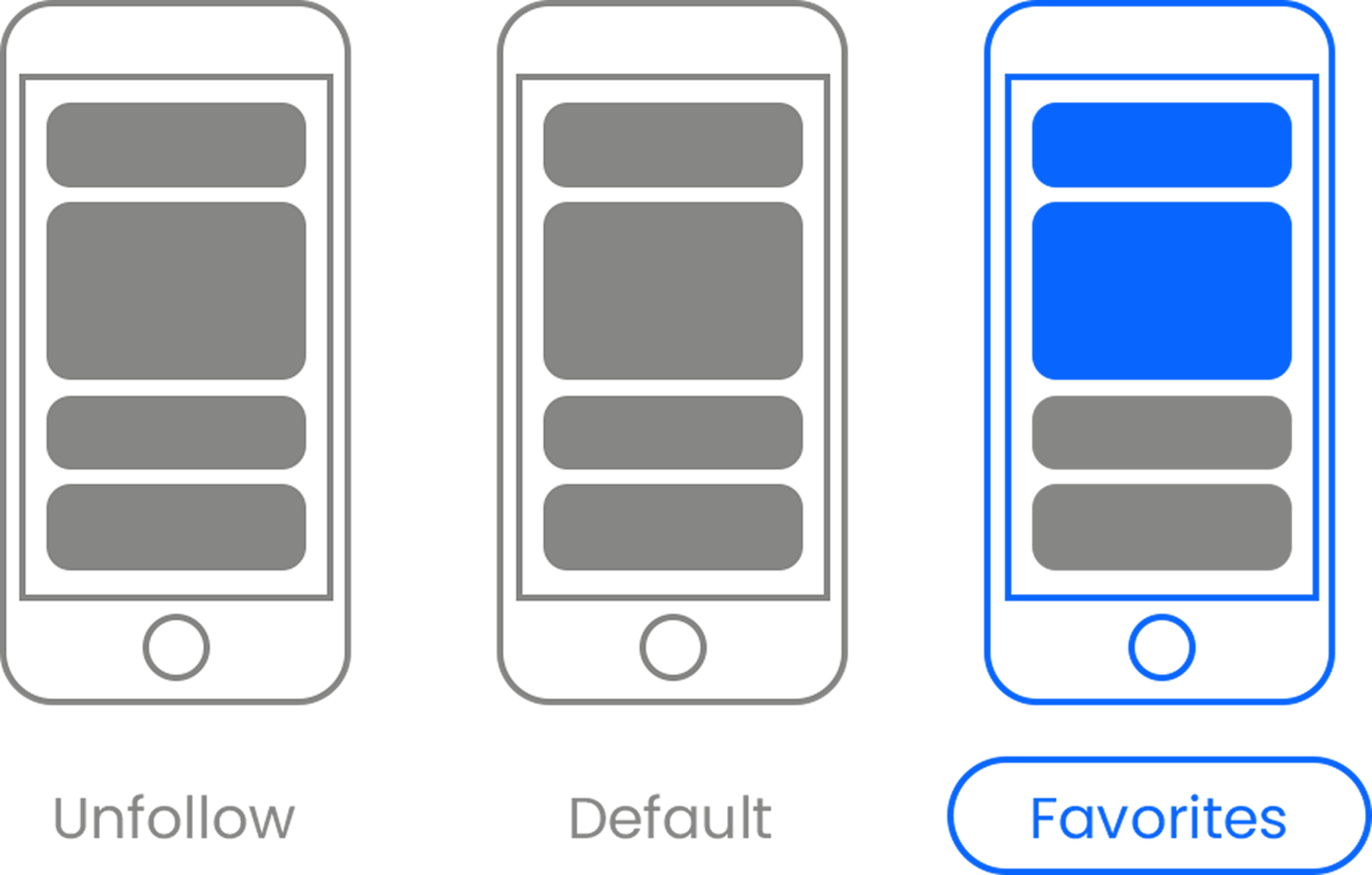

The algorithms on social networks, like Facebook, also use your data and click history to determine which friends you see updates from and which particular news stories shared by those friends you see. This means you're more likely to see friends that think like you and news stories that align with your worldview, thereby creating an echo chamber.
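To make the echo-chamber effect concrete, here is a deliberately simplified sketch in Python. It is a toy model, not how Facebook or any other platform actually ranks content: the function name `rank_feed`, the topic labels, and the scoring rule (count of past clicks per topic) are all assumptions made for illustration.

```python
from collections import Counter


def rank_feed(stories, click_history):
    """Order stories so that topics the user clicked most often come first.

    stories: list of (title, topic) pairs (hypothetical data).
    click_history: list of topics the user clicked on in the past.
    """
    topic_clicks = Counter(click_history)
    # sorted() is stable, so stories with equal scores keep their original order.
    return sorted(stories, key=lambda story: topic_clicks[story[1]], reverse=True)


stories = [
    ("Study questions policy A", "viewpoint_b"),
    ("Rally for policy A", "viewpoint_a"),
    ("Local weather update", "weather"),
    ("Op-ed backs policy A", "viewpoint_a"),
]
click_history = ["viewpoint_a", "viewpoint_a", "weather", "viewpoint_a"]

for title, topic in rank_feed(stories, click_history):
    print(title, "|", topic)
```

In this toy example, the one story from a viewpoint the user has never clicked on sinks to the bottom of the feed, even though it was listed first. Repeated over time, that kind of feedback loop is one way an echo chamber could form.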

Your personal data online could also be used to create fake accounts that seem more legitimate. For example, Donovan says a friend of hers had his wedding photos stolen from Flickr and used as part of a meme campaign against Hillary Clinton.

So how can you protect yourself?

1. Slow down.

"As information participants, we're not just information consumers anymore. We're participants and when we're online, we need to slow ourselves down," says Starbird. Before you retweet, go to the account and look at previous tweets. "Make sure you really want to endorse something that the account is saying."

2. If something you read online seems outrageous, double check that story with other trusted news sources.

"Stories meant to stoke rage and anger coupled with novelty (that is, you're not seeing them elsewhere) are the recipes driving people to share false stories," explains Donovan. "So you read a headline that's 'Famous actor says some racial slur,' don't share it out of rage if no one else is saying it's true."

3. Know it's hard sometimes to recognize fake accounts — they look real.

"As a researcher who studies this, sometimes we can spend hours — I mean 40 hours or even 100 hours — looking at specific accounts to figure out if this is a real person or if it is an impersonator or a troll from another country."

It's hard because the bad actors that create these accounts spend years "seasoning" them to fool you.

For example, Starbird knows of some accounts by Russian actors that started out simply tweeting real information during natural disasters in order to build their audience. Then, once they had the audience, they started sprinkling in fake information or calling real events fake.

Donovan knows of another account that started as a celebrity gossip profile before changing to political disinformation closer to the 2016 election.

4. When in doubt, dig a little deeper.

If you're still not sure, Donovan says you can use the Wayback Machine to see if the account has changed personas in the past.

You can also download the avatar or the banner image on the social media accounts and do a reverse image search to see if the picture shows up elsewhere or if it's real.

If you still can't tell if an account is real, don't follow it.
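For readers comfortable with a little scripting, the Wayback Machine check in step 4 can be partly automated. The sketch below assumes the Internet Archive's public availability endpoint (`https://archive.org/wayback/available`) and its JSON response shape (`archived_snapshots` → `closest`). The helper names and the example account are hypothetical, and the sample response is made up to show the format rather than taken from a real lookup.

```python
import json
from urllib.parse import urlencode

# Public availability endpoint of the Internet Archive's Wayback Machine.
WAYBACK_AVAILABILITY_ENDPOINT = "https://archive.org/wayback/available"


def build_availability_url(profile_url, timestamp=None):
    """Build a query asking for the snapshot closest to `timestamp` (YYYYMMDD)."""
    params = {"url": profile_url}
    if timestamp:
        params["timestamp"] = timestamp
    return WAYBACK_AVAILABILITY_ENDPOINT + "?" + urlencode(params)


def closest_snapshot(response_text):
    """Return (snapshot_url, timestamp) from an availability response, or None."""
    payload = json.loads(response_text)
    closest = payload.get("archived_snapshots", {}).get("closest")
    if not closest or not closest.get("available"):
        return None
    return closest["url"], closest["timestamp"]


# Made-up response for a hypothetical account, used only to show the format.
sample_response = json.dumps({
    "archived_snapshots": {
        "closest": {
            "available": True,
            "url": "http://web.archive.org/web/20160101000000/http://twitter.com/example_account",
            "timestamp": "20160101000000",
            "status": "200",
        }
    }
})

print(build_availability_url("twitter.com/example_account", "20160101"))
print(closest_snapshot(sample_response))
```

To run a real lookup, you could fetch the built URL with `urllib.request.urlopen` and pass the decoded body to `closest_snapshot`. Opening the returned snapshot in a browser shows what the profile looked like around that date, which you can compare with how it presents itself today.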

5. If you make a mistake, correct it.

"If we do spread something wrong, don't just delete it," says Starbird. "Actually go back and let everyone know who might have reshared your post that it was actually wrong. If it's Facebook, actually edit the post and say this is wrong. Let people know that we've made a mistake."

"Journalists have these standards of fact-checking," she continues. "Well, we're all talking about being citizen journalists now, so now we have to take on some of that responsibility if we want to have the privilege."

6. Use the 'flag' tool.

If you think you've found a fake account or you're seeing dangerous, false information online, flag it.

This is safer than trying to dispel information on your own. "If it's a serious disinformation campaign, groups of people who want to remain online will attack you in order to try to shut you down personally," says Donovan.

7. Protect your personal data while you browse online.

If a disinformer is using advertising technology to target you, a browser with privacy controls that limit how much information is collected about you might help. Mozilla, for example, turns on privacy features by default in the Firefox browser and uses its Enhanced Tracking Protection feature to stop known trackers from following your activity across the sites you visit, which limits their ability to build a profile of you.

You can also use private browsing or incognito mode, which discards the cookies and cached data from that session when you close the window.

8. Remember that we all have an important role to play in stopping the spread of disinformation.

"We sometimes have this idea, just like with voting, that we're too small," says Starbird. "'Nothing I do is going to make a big impact,' and yet at scale, absolutely it does. Misinformation doesn't spread itself. We spread it, as information participants."

"There is a well-founded fear that pervasive disinformation is undermining our trust in information systems, our trust in our democratic election systems and our trust in each other," she continues. "That can undermine democratic society because if we can't come together with some kind of shared reality and an acceptance of others in our country as legitimate political actors, then we can't come together to govern ourselves. In those conditions, democracy falls apart."

Mozilla
